ControlNet was released early this year and lets you ‘control’ Stable Diffusion image generation with normal/depth maps, face poses, globally aware inpainting, and more. Learning how to use ControlNet has been on my to-do list for some time, but I lacked a project interesting enough to really get started.

If you want a good general overview and guide for using ControlNet, take a look here.

… in life the QRCodeMonsters win

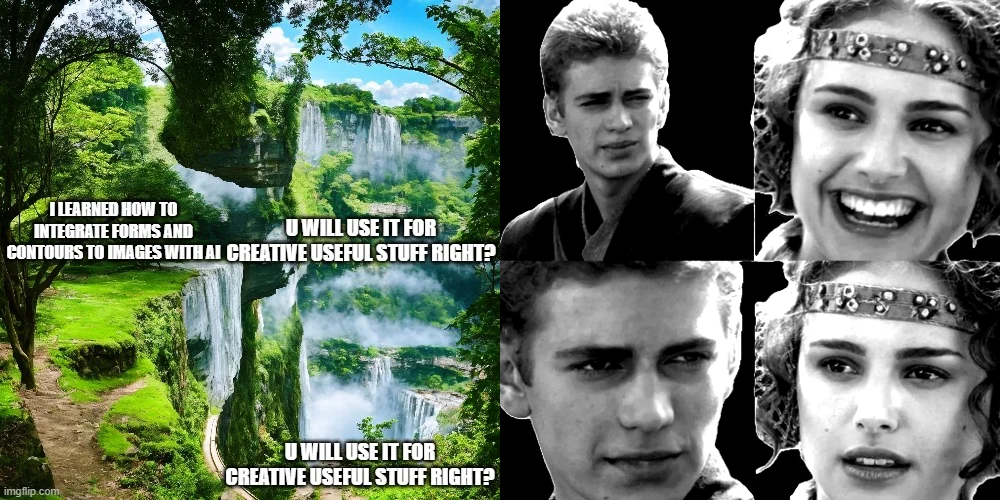

I first found QRCodeMonster after seeing this meme on the Stable Diffusion Reddit and being curious how they got that working.

QRCodeMonster is a ControlNet model; here it was used with a modified meme image to generate what you see above. It is intended to be given a QR code on a grey (#808080) background to embed in the generated images, but as you can see, it also works on other shapes and images, as long as they’re greyscale with the background removed. The ControlNet input image used is shown next to the meme above.

In the rest of this post, we’ll take a quick look at generating prompt-driven creative QR codes like those on the QRCodeMonster model page. I’ll also share some tips I picked up along the way and some good resources I used.

Getting started

As always, we’ll be using Automatic1111 as the base system for generating images with Stable Diffusion. If you haven’t set it up before, follow the instructions in the repo’s README.

You will also need the ControlNet extension for Automatic, which you can find here along with installation instructions. Make sure you also download the ControlNet models and move them to the correct location.

For better and more interesting styles, I also suggest using a different checkpoint than the default SD 1.5 model. The generations in this post use Edge of Realism, but you can find other checkpoints on CivitAI too.

Finally, we need to download the QRCodeMonster model and place it in Automatic’s models/controlnet folder along with the other ControlNet models we downloaded earlier.

Generating QR Codes

Now we need to make our QR code. There are lots of terrible services for this you can find around the web. A few good ones are below.

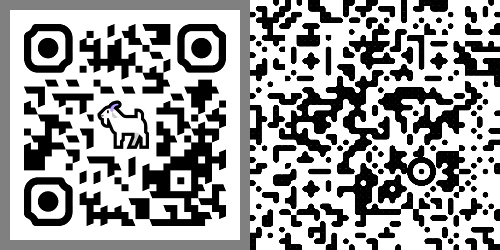

Whichever service you use, make sure you set the module size to 16px. Optionally, you can edit the PNG you get to add a #808080 border which QRCodeMonster will interpret as the background image.

- Anthony Fu’s QR Code Toolkit WebUI extension - This has a ton of features and is built into AUTOMATIC Web UI. Probably just use this.

- Anthony Fu’s QR Code Toolkit - Web page version.

- Nayuki.io - Simple web app

- QR Code Monkey - Use this if you need to put a logo or image in the center of the QR code.
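If you’d rather script the 16px-module and grey-border step than edit the PNG by hand, here’s a minimal, stdlib-only Python sketch. It assumes you already have the QR code as a square matrix of booleans (True = dark module) from whatever generator you use. The function names are hypothetical, and placing the grey border directly around the modules (with no white quiet zone in between) is my own assumption rather than something the model page prescribes.

```python
import struct
import zlib

GREY = 0x80   # #808080, which QRCodeMonster treats as background
BLACK = 0x00  # dark module
WHITE = 0xFF  # light module


def render_modules(matrix, module_px=16, border_modules=4):
    """Scale a square QR module matrix (True = dark) into rows of 8-bit grey
    pixels, each module module_px wide, surrounded by a #808080 border that is
    border_modules modules thick."""
    size = len(matrix)
    side = (size + 2 * border_modules) * module_px
    rows = []
    for y in range(side):
        my = y // module_px - border_modules  # module row (negative = border)
        row = bytearray()
        for x in range(side):
            mx = x // module_px - border_modules  # module column
            if 0 <= my < size and 0 <= mx < size:
                row.append(BLACK if matrix[my][mx] else WHITE)
            else:
                row.append(GREY)
        rows.append(bytes(row))
    return rows


def _chunk(tag, data):
    """One PNG chunk: length, type, data, CRC over type + data."""
    body = tag + data
    crc = zlib.crc32(body) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + body + struct.pack(">I", crc)


def write_grey_png(path, rows):
    """Write rows of 8-bit greyscale pixels as a PNG (colour type 0, no filtering)."""
    height, width = len(rows), len(rows[0])
    # width, height, bit depth 8, colour type 0 (grey), compression, filter, interlace
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 0, 0, 0, 0)
    raw = b"".join(b"\x00" + row for row in rows)  # filter byte 0 (None) per row
    with open(path, "wb") as f:
        f.write(b"\x89PNG\r\n\x1a\n")
        f.write(_chunk(b"IHDR", ihdr))
        f.write(_chunk(b"IDAT", zlib.compress(raw)))
        f.write(_chunk(b"IEND", b""))
```

For example, `write_grey_png("qr_control.png", render_modules(matrix))` produces an image you can load into the ControlNet unit.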

A Note on Masks

You can get really advanced with creating the ControlNet mask(s), which we won’t cover in this post. There’s some good information on antfu.me about creating more advanced masks or blending QR masks with text and other masks.

Using QRCodeMonster

Prompts

ControlNet settings will vary a lot depending on your prompt. If you’re getting terrible generations regardless of settings, try refining your prompt using GPT/PromptHero/etc.

Adding style keywords and making sure the prompt will generate ‘busy’ images helps produce better results.

If you want to ‘reverse engineer’ an existing image’s style, you can use CLIP to generate a prompt, but you’ll probably want to refine it yourself and add extra words for style and contrast. Automatic has a CLIP interrogator built in, or you can use an online one here.

These are the base prompts I’ve been using for QR generation. Adding this prompt, or a similar one that boosts contrast, can help with scannability.

Prompt:

[your subject here], contrast, high key, (best quality, masterpiece, realistic, hyper detailed), vibrant colors, trending on ArtStation, trending on Instagram, (HD resolution, 4k resolution, 8k resolution, ultra high resolution), captivating details

Negative:

worst quality, normal quality, low quality, low res, blurry, text, watermark, logo, banner, extra digits, cropped, jpeg artifacts, signature, username, error, sketch, duplicate, ugly, monochrome, horror, geometry, mutation, disgusting

Settings

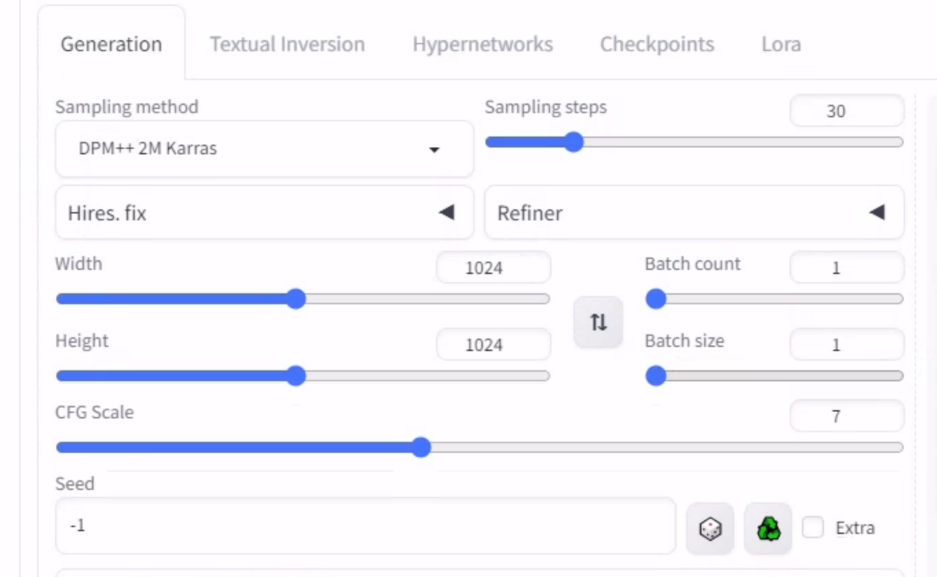

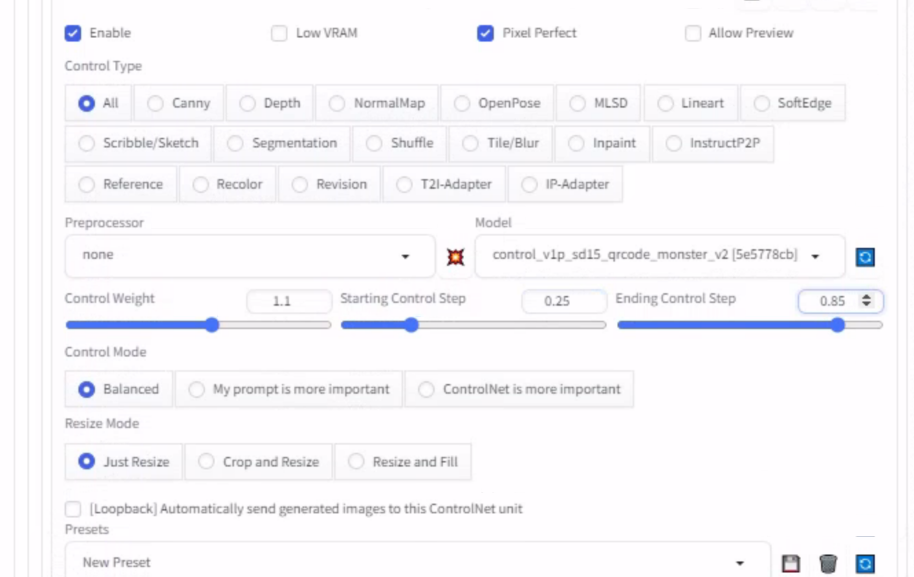

These are the standard settings I start with for QR code generation.

We want ControlNet to run only in the middle of the image generation. Starting it late means the first steps are guided by the prompt alone, which helps the final image’s creativity because the QR code isn’t shaping the composition from the start.

Similarly, we want ControlNet to end before diffusion stops, so the final steps can add detail without being constrained by the QR code.
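The Starting and Ending Control Step values are fractions of the sampling run, so it can help to translate them into actual steps. The sketch below does that, assuming a step i counts as active when i / total_steps falls between the two values; the extension’s exact rounding may differ by a step, and the 20/0.25/0.75 example is a placeholder rather than the settings in the screenshots above.

```python
def active_steps(total_steps, start, end):
    """0-based sampling steps during which ControlNet is applied.

    ASSUMPTION: step i counts as active when start <= i / total_steps <= end;
    the extension's exact rounding may differ by a step."""
    if not 0.0 <= start <= end <= 1.0:
        raise ValueError("expected 0 <= start <= end <= 1")
    return [i for i in range(total_steps) if start <= i / total_steps <= end]


def phases(total_steps, start, end):
    """Split a run into prompt-only, ControlNet-guided, and detail-only steps."""
    active = active_steps(total_steps, start, end)
    if not active:
        raise ValueError("ControlNet would never run with these settings")
    return {
        "prompt_only": active[0],                # steps before ControlNet starts
        "controlled": len(active),               # steps where the QR code shapes the image
        "detail": total_steps - active[-1] - 1,  # steps after ControlNet ends
    }


print(phases(20, 0.25, 0.75))  # -> {'prompt_only': 5, 'controlled': 11, 'detail': 4}
```

Raising the ending value shrinks the detail-only phase, which is why extending it tends to trade creativity for scannability.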

Once you are getting consistent scannable images in a batch, you should generate lots of images and use a scanner to sort through the results. QR-Verify is purpose-built for this! (Thanks for all the tools Anthony Fu!)
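If you’d rather sort a batch locally, the sketch below moves each PNG in a folder into scans/ or fails/. The decoder is left as a parameter because I’m not assuming any particular QR library; `sort_by_scannability` and the folder names are hypothetical, and passing `expected` also checks that the code decodes to the URL you meant.

```python
import shutil
from pathlib import Path
from typing import Callable, List, Optional, Tuple

# A decoder takes an image path and returns the decoded QR text, or None.
Decoder = Callable[[Path], Optional[str]]


def sort_by_scannability(folder, decode: Decoder,
                         expected: Optional[str] = None) -> Tuple[List[str], List[str]]:
    """Move every *.png in `folder` into folder/scans or folder/fails.

    An image passes if `decode` returns text and, when `expected` is given,
    that text matches it exactly. Returns (passed, failed) file names."""
    folder = Path(folder)
    (folder / "scans").mkdir(exist_ok=True)
    (folder / "fails").mkdir(exist_ok=True)
    passed, failed = [], []
    # sorted() reads the whole listing before any file is moved
    for img in sorted(folder.glob("*.png")):
        text = decode(img)
        ok = text is not None and (expected is None or text == expected)
        dest = folder / ("scans" if ok else "fails") / img.name
        shutil.move(str(img), str(dest))
        (passed if ok else failed).append(img.name)
    return passed, failed
```

Any function that returns the decoded text or None works as `decode`. For example, OpenCV’s `cv2.QRCodeDetector().detectAndDecode(image)` returns the decoded text (an empty string on failure) as the first item of its result, so a small wrapper around it would fit.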

Tips:

- Control Weight should usually be between 1 and 1.3. Increasing it decreases the creativity of your outputs but increases their consistency/scannability.

- If you are getting images that look like QR codes but don’t scan, try extending the Ending Control Step.

- If you are getting images that don’t look like QR codes, try lowering the Starting Control Step.

- You may also want to try Latent upscaling from a 512x512 image to 1024x1024 under Highres. fix for different results.

- Adding and removing steps can have dramatic effects. 25-45 steps have worked well for me with QR codes.
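If you want to run these sweeps without clicking through the UI, Automatic exposes an HTTP API when launched with --api, and the ControlNet extension accepts units through `alwayson_scripts`. The sketch below builds one txt2img payload per Control Weight (1 to 1.3) and step count (25 to 45) from the tips above. The ControlNet field names reflect my understanding of the extension’s API and may differ between versions, the model name may need the hash suffix shown in the UI dropdown, and the start/end values you pass in are yours to choose.

```python
import base64
import itertools
import json
import urllib.request

# Default local address of the web UI; it must be launched with --api.
API_URL = "http://127.0.0.1:7860/sdapi/v1/txt2img"


def build_payload(prompt, negative, qr_png, weight, steps, start, end,
                  highres=False, model="control_v1p_sd15_qrcode_monster"):
    """One txt2img request with a single ControlNet unit fed the QR image.

    ASSUMPTION: field names follow the sd-webui-controlnet API as I understand
    it; `model` must match the name in the ControlNet model dropdown."""
    unit = {
        "input_image": base64.b64encode(qr_png).decode("ascii"),
        "module": "none",         # no preprocessor: the QR image is the control map
        "model": model,
        "weight": weight,         # Control Weight
        "guidance_start": start,  # Starting Control Step (fraction of steps)
        "guidance_end": end,      # Ending Control Step (fraction of steps)
    }
    payload = {
        "prompt": prompt,
        "negative_prompt": negative,
        "steps": steps,
        "width": 512,
        "height": 512,
        "alwayson_scripts": {"controlnet": {"args": [unit]}},
    }
    if highres:
        # Highres. fix with the Latent upscaler: 512x512 -> 1024x1024.
        # The denoising strength is a placeholder, not a tested value.
        payload.update(enable_hr=True, hr_scale=2, hr_upscaler="Latent",
                       denoising_strength=0.5)
    return payload


def sweep(prompt, negative, qr_png, start, end,
          weights=(1.0, 1.1, 1.2, 1.3), step_counts=(25, 35, 45), highres=False):
    """One payload per (weight, steps) combination."""
    return [build_payload(prompt, negative, qr_png, w, s, start, end, highres=highres)
            for w, s in itertools.product(weights, step_counts)]


def post(payload, url=API_URL):
    """Send a payload; the JSON reply lists base64-encoded images under "images"."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```

For example, `sweep(prompt, negative, open("your_qr.png", "rb").read(), start=0.2, end=0.8)` returns 12 payloads (the 0.2/0.8 values are placeholders), each of which you can send with `post`.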

You can also run QRCodeMonster in img2img, which is useful for refining images made with txt2img. The model page has a workflow suggestion for refinement that works well:

Increase the controlnet guidance scale value for better readability. A typical workflow for “saving” a code would be: Max out the guidance scale and minimize the denoising strength, then bump the strength until the code scans.

- You can also upscale using img2img and QRCodeMonster with good results.
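The model page’s “saving” workflow is essentially a simple search loop. Here’s a sketch of it, with two interpretations of mine: “guidance scale” is read as ControlNet’s Control Weight, and “the strength” as img2img denoising strength. `img2img` and `scans` are hypothetical hooks you’d wire to your own generation call and QR decoder, `max_weight=2.0` assumes that is the slider’s maximum, and the starting strength and increment are placeholders.

```python
from typing import Callable, Optional, Tuple, TypeVar

Image = TypeVar("Image")


def save_code(
    img2img: Callable[[float, float], Image],
    scans: Callable[[Image], bool],
    max_weight: float = 2.0,
    start_strength: float = 0.1,
    step: float = 0.05,
    max_strength: float = 1.0,
) -> Optional[Tuple[float, Image]]:
    """Hold the ControlNet weight at its maximum and raise the denoising
    strength until the output scans. Returns (strength, image), or None.

    `img2img(weight, strength)` and `scans(image)` are hypothetical hooks."""
    n = 0
    strength = start_strength
    while strength <= max_strength + 1e-9:
        image = img2img(max_weight, strength)
        if scans(image):
            return round(strength, 4), image
        n += 1
        strength = start_strength + n * step  # recompute to avoid float drift
    return None
```

Starting low keeps as much of the original image as possible; each bump gives the maxed-out ControlNet more room to pull the pattern back into a readable code.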

Results


Final Thoughts

Even though the more creative QR images that don’t look like QR codes are really cool, I think for most uses you do want the image to look like a QR code, so that people recognize it and actually scan it.

You probably also want more control than vague Stable Diffusion prompts and LoRAs give you. That’s possible by adding another ControlNet model with a different input image, but it makes the settings a lot harder to tune for good outputs.

In a future post, we’ll look at how using different input images with QRCodeMonster works and the various ControlNet models you can use there.