If you want to skip straight to scripts, click here!

Stable Diffusion Release!

We knew the world would not be the same – A few people laughed, a few people cried, most people were silent.

And just like that, the whole world changes. Stable Diffusion and its openness are a massive improvement over the tools we’ve been using in this space, and to our ability to modify and understand them.

It was bound to happen, but I am very impressed with both how quickly it rolled out and how powerful the tool is.

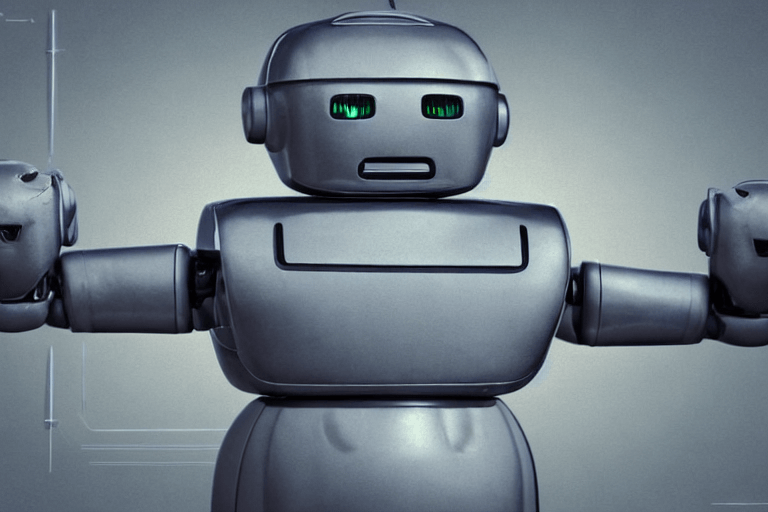

I still have not explored most of the models or played much with the tools added since latent diffusion. One thing that slowed my testing in the Latent-Diffusion repo, though, was the scripting and the way images are output.

No one likes long terminal commands and digging through integer-named files – I decided to do something.

As often happens with these kinds of things, what started as a simple exercise to build a bash alias ended up as a two-day journey battling shell syntax and figuring out how to automate image processing tasks.

Making Improvements

I’ve been messing with improving the scripts and tools in latent-diffusion for a couple of weeks. Most of the problems I was trying to solve, or had already solved, still existed in the Stable Diffusion release.

I was able to port some of the changes I made in latent-diffusion over to Stable Diffusion. I then put some more effort into making simple ZSH commands to run bulk tests for txt2img and img2img, which I am releasing below. Improvements include:

- Sample file names include prompts

- Easier to run with defaults or parameter changes (`btxt2img 4 42 100`)

- Unique folder output based on ISO dates

- Better grid creation with ImageMagick

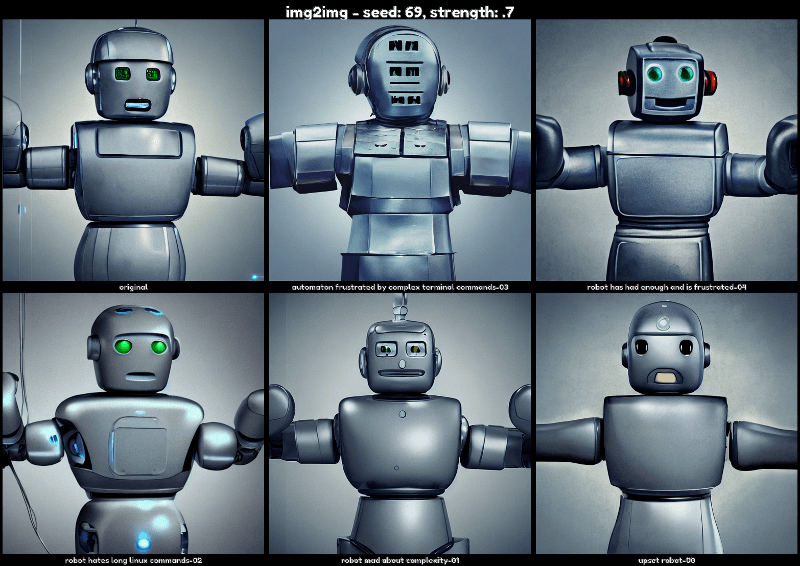

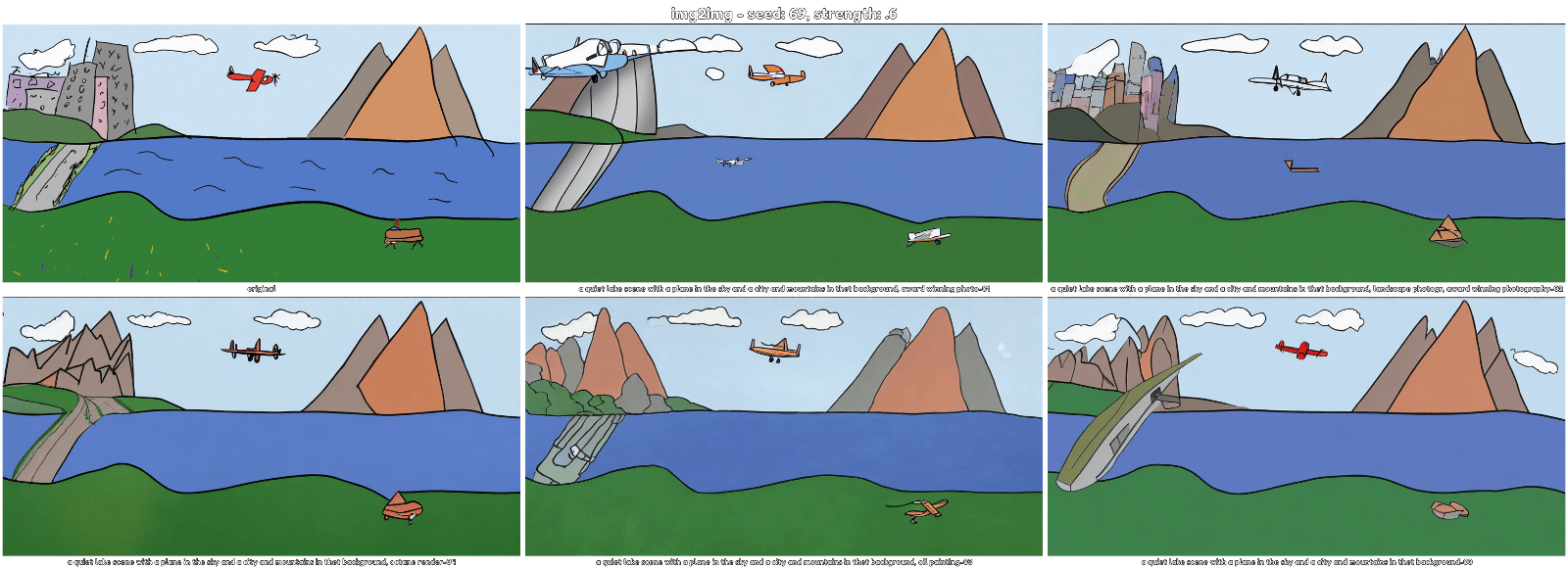

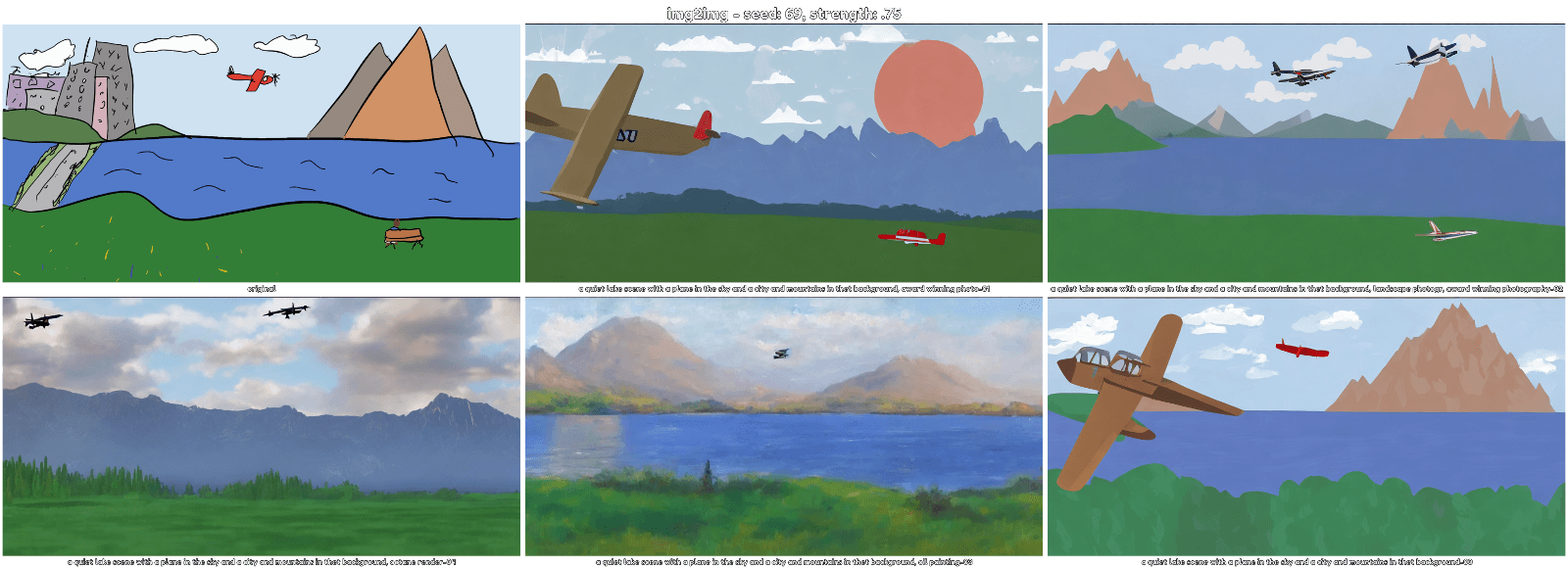

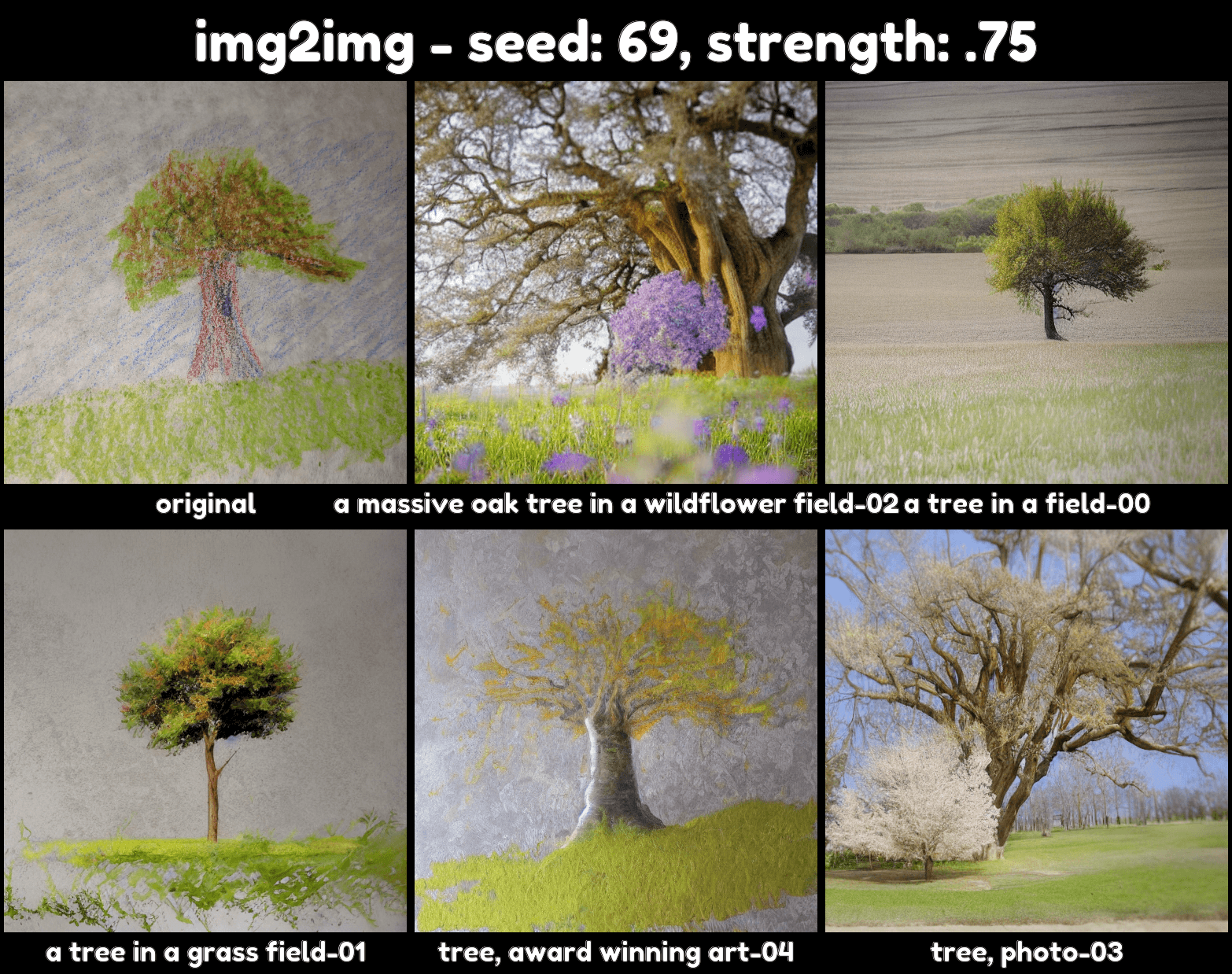

- img2img grid includes original photo and prompt labels for each sample

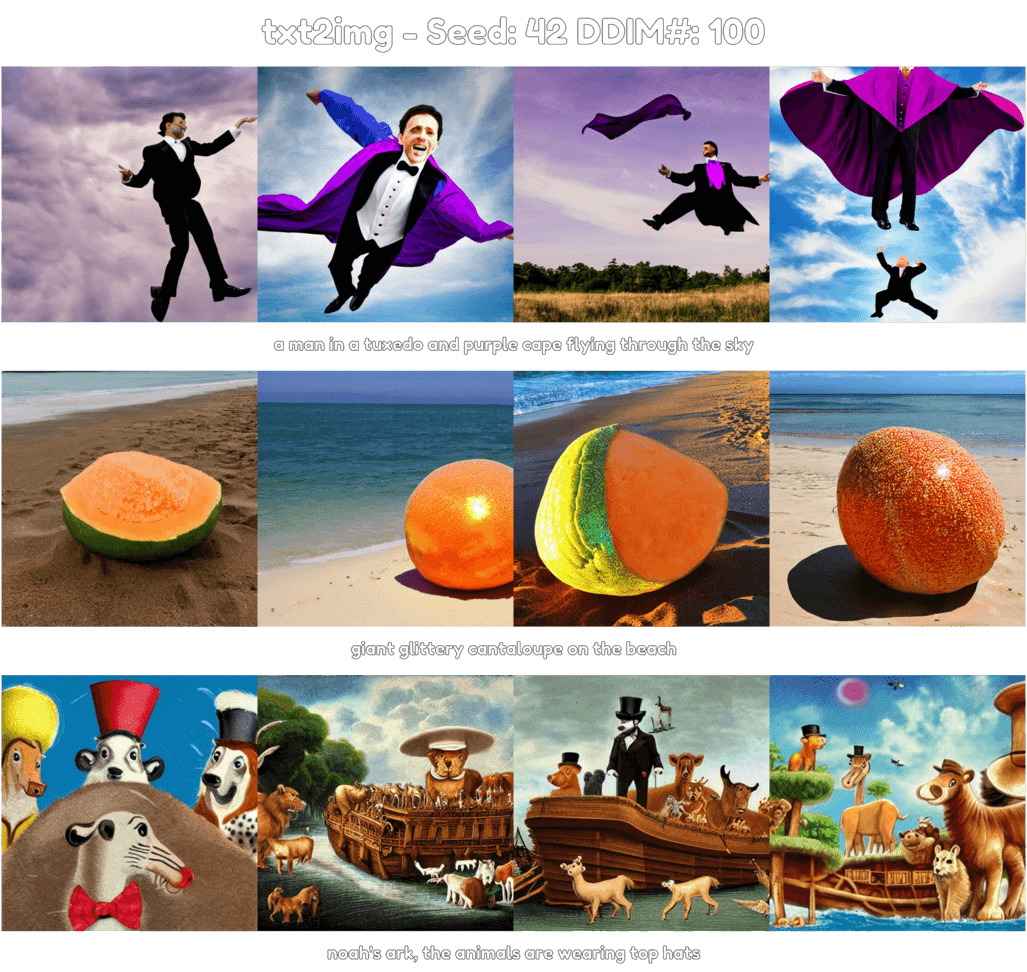

- txt2img grid includes prompt labels and 1 row per prompt

- Grid settings (fonts, colors, borders)
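To make the "file names include prompts" idea concrete, here is a minimal sketch of how a prompt could be turned into a filesystem-friendly sample name. The function name `prompt_to_filename`, the 60-character cap, and the `_0003` index suffix are all assumptions made for illustration; the scripts in the repository may build names differently.

```shell
# Hypothetical helper, not taken from the repository:
# turn a prompt plus a sample index into a safe file name.
prompt_to_filename() {
  # $1 = prompt text, $2 = sample index (plain integer)
  local slug
  slug=$(printf '%s' "$1" \
    | tr '[:upper:]' '[:lower:]' \
    | tr -cs 'a-z0-9' '-' \
    | cut -c1-60 \
    | sed -e 's/^-*//' -e 's/-*$//')
  # Lowercased, non-alphanumeric runs collapsed to "-", capped at 60 chars,
  # leading/trailing dashes trimmed, then a zero-padded index appended.
  printf '%s_%04d.png\n' "$slug" "$2"
}

prompt_to_filename "A red fox, oil painting" 3
# prints: a-red-fox-oil-painting_0003.png
```

Keeping the prompt in the name means a sample can be identified from a file listing alone, without opening a log to find out which integer maps to which prompt.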

Stable Diffusion Helper Scripts

Stable Diffusion Helper Scripts requires ZSH. I have not tested this in bash, but I suspect a bunch of things will break. If you want to port this to bash or anything else – go for it and please let me know – but you should probably just install ZSH if you intend to use this.

All the files you need are located in the GitHub repository.

Instructions and Examples

Img2img Grids with Different Strengths

Txt2img Grids

How to Use

- Clone the repo or download the files

- Copy `txt2img2.py` and `img2img2.py` to your `stable-diffusion/scripts` folder

- Run `cat ./zshrc_scripts >> ~/.zshrc`

- Open `~/.zshrc` in a text editor and fill out the `User Entered Variables` section

  - The paths for Stable Diffusion and the font to use for grid labels need to be changed for your system

  - You will need to create the prompt txt files if they do not exist

- Restart your shell or run `source ~/.zshrc`

Now you can create a list of prompts in your img or txt prompt file (1 prompt per line like with the standard script).
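If you would rather build the prompt file from the terminal, something like the following should work. This is only a sketch: `add_prompt` and `list_prompts` are hypothetical helpers that are not part of the repository, and the path in the example comment is a placeholder for whatever you set in the `User Entered Variables` section.

```shell
# Hypothetical helpers, not part of the repository.

# Append one prompt to a prompt file, creating the file and its folder if needed.
add_prompt() {
  # $1 = prompt file, $2 = prompt text
  mkdir -p "$(dirname "$1")" || return 1
  printf '%s\n' "$2" >> "$1"
}

# Print each non-empty prompt with its number, reading one prompt per line.
list_prompts() {
  # $1 = prompt file
  local n=0 line
  while IFS= read -r line || [ -n "$line" ]; do
    [ -n "$line" ] || continue
    n=$((n + 1))
    printf '%d: %s\n' "$n" "$line"
  done < "$1"
}

# Example (the path is a placeholder):
#   add_prompt ~/sd-prompts/txt2img.txt "a lighthouse at dusk, watercolor"
#   list_prompts ~/sd-prompts/txt2img.txt
```

Running `list_prompts` before a long batch is a cheap way to confirm that every line is a prompt you actually meant to generate.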

When you’re ready to generate, run btxt2img or bimg2img /path/to/img.

The samples (and the original file for bimg2img) will be saved to their own timestamped directory in the output folder. The new grid will be saved to the same directory as fullgrid.png.
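For anyone curious how this kind of output layout can be produced, here is a rough sketch rather than the actual code from the repository. `new_run_dir` and `make_grid` are hypothetical names. The timestamp uses dashes instead of colons so it stays filename-safe, and it is only unique down to the second. The `montage` options shown (`-label`, `-tile`, `-geometry`) are standard ImageMagick options, with `%t` expanding to each file's name without its extension.

```shell
# Hypothetical helpers, not taken from the repository.

# Create a sortable, timestamped run folder and print its path.
new_run_dir() {
  # $1 = output root
  local dir
  dir="$1/$(date +%Y-%m-%dT%H-%M-%S)"
  mkdir -p "$dir" && printf '%s\n' "$dir"
}

# Build a single labeled strip from the PNG samples in a run folder.
# Requires ImageMagick's montage; run it once per folder, since a second
# run would also pick up the existing fullgrid.png.
make_grid() {
  # $1 = run folder
  montage -label '%t' "$1"/*.png -tile x1 -geometry +4+4 "$1/fullgrid.png"
}

# Example (the output root is a placeholder):
#   run_dir=$(new_run_dir ~/sd-outputs)
#   ... generate samples into "$run_dir" ...
#   make_grid "$run_dir"
```

Because the folder name sorts chronologically, a plain `ls` of the output root lists runs in the order they were made.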

For help changing parameters, check the ~/.zshrc file or run either command with the --help argument.

Up Next

In Latent-Diffusion, I created a script to compare RAD with LDM models that I need to remake in Stable-Diffusion. I will probably wait to do this until I figure out how to make my own image databases for RAD. I did find a repository that has an example of doing this, so hopefully I can use that to figure something out.

I also need to rework the grid tools to account for generations of more than 5 or 6 images to split the strips into multiple rows.
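One possible starting point for that rework, sketched here as an idea rather than something already in the scripts: compute a `COLSxROWS` value for ImageMagick's `-tile` option from the number of images and a maximum strip width. The function name `grid_tile` and the cap of 5 per row are assumptions.

```shell
# Hypothetical helper: pick a montage tile layout that wraps long strips.
grid_tile() {
  # $1 = number of images, $2 = maximum images per row
  local n="$1" max="$2" cols rows
  # Reject zero or negative inputs to avoid dividing by zero below.
  [ "$n" -ge 1 ] && [ "$max" -ge 1 ] || return 1
  if [ "$n" -le "$max" ]; then cols="$n"; else cols="$max"; fi
  # Ceiling division: enough rows to hold every image.
  rows=$(( (n + cols - 1) / cols ))
  printf '%sx%s\n' "$cols" "$rows"
}

grid_tile 4 5    # prints: 4x1
grid_tile 12 5   # prints: 5x3
```

The result could then be passed straight to montage, for example `-tile "$(grid_tile 12 5)"`, so short runs stay on one row and longer ones wrap.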

Enjoy – Please leave any questions or comments below!